In the dynamic landscape of software development, uncertainty is the only certainty. Traditional project management relied on extensive upfront planning to mitigate risk, often creating fragile baselines that crumbled under the weight of changing requirements. Agile methodologies shifted the focus to adaptability, yet this does not eliminate risk; it merely changes its nature. Understanding how to leverage delivery data to assess risk is critical for organizational stability and successful outcomes.

This guide explores the architecture of risk assessment models built upon Agile delivery data. We will examine the metrics that matter, the pitfalls of misinterpretation, and the structural integrity required to build a system that provides clarity rather than false confidence. The goal is not to predict the future with absolute precision, but to illuminate the path forward with enough visibility to make informed decisions.

The Limitations of Predictive Risk Models 🛑

Legacy risk management frameworks often depend on fixed parameters. They assume a linear progression in which a fixed scope and plan yield a predictable result. In an Agile environment, requirements evolve, feedback loops shorten, and team dynamics fluctuate. A model built on static assumptions will inevitably fail to capture the true state of risk.

Several fundamental issues plague traditional approaches when applied to iterative delivery:

- False Certainty: Predictive models often present a single point estimate for delivery dates. This ignores the variance inherent in complex systems. A single date suggests a level of control that rarely exists.

- Lagging Indicators: Traditional risk registers are often updated quarterly or at milestone gates. By the time a risk is recorded, the damage is often already done. Agile data is continuous, requiring continuous assessment.

- Context Blindness: A raw number, such as a story point count, lacks context. Without understanding the team’s capacity, the complexity of the feature, or external dependencies, the data is meaningless.

- Human Factor: Risk is often behavioral. Fear of reporting bad news, over-optimism in estimation, or burnout are risks that cannot be captured by a simple metric without qualitative analysis.

To build a robust model, we must shift from predicting specific outcomes to monitoring health signals. The model should function as an early warning system, highlighting areas where the probability of failure increases, rather than declaring a fixed end date.

Foundations of Agile Risk Data 📂

Before constructing a model, one must define the data sources. Reliability is paramount. If the input data is flawed, the risk assessment will be misleading. This section outlines the primary data streams required for accurate analysis.

1. Work Item Data

The backbone of any assessment is the work itself. This includes user stories, tasks, and bugs. The data must capture the lifecycle of an item from creation to completion. Key attributes include:

- Creation Date: When was the work requested?

- Start Date: When did work actually begin?

- Completion Date: When did it reach the defined state of done?

- Priority: The perceived importance of the work.
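The attributes above can be captured in a small record type. The following is a minimal sketch, not a prescribed schema: the class name `WorkItem`, the field names, the sample keys, and the priority scale are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class WorkItem:
    """One story, task, or bug with the lifecycle attributes listed above."""
    key: str                   # hypothetical identifier, e.g. "PAY-101"
    created: date              # when the work was requested
    started: Optional[date]    # when work actually began (None if not started)
    completed: Optional[date]  # when it reached "done" (None if still open)
    priority: int              # assumed scale: 1 = highest

    def is_consistent(self) -> bool:
        """Dates that are present must appear in lifecycle order."""
        stamps = [d for d in (self.created, self.started, self.completed) if d is not None]
        return stamps == sorted(stamps)


item = WorkItem("PAY-101", date(2024, 3, 1), date(2024, 3, 4), date(2024, 3, 8), priority=2)
bad = WorkItem("PAY-102", date(2024, 3, 5), date(2024, 3, 1), None, priority=1)
```

A consistency check like this is one cheap way to catch flawed input before it reaches the risk calculations.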

2. Capacity and Velocity Data

Velocity is a measure of output, but in the context of risk, what matters is how stable it is. Consistent velocity suggests predictability. Highly volatile velocity indicates instability. This volatility is a leading indicator of schedule risk.
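One possible way to quantify velocity volatility is the coefficient of variation (standard deviation divided by the mean), which lets teams with different absolute velocities be read on the same scale. This is a sketch under that assumption; the function name and the sample sprint figures are invented for illustration.

```python
from statistics import mean, stdev


def velocity_volatility(velocities):
    """Coefficient of variation: sample standard deviation divided by the mean."""
    if len(velocities) < 2:
        raise ValueError("need at least two sprints to measure variation")
    avg = mean(velocities)
    if avg == 0:
        raise ValueError("mean velocity is zero; volatility is undefined")
    return stdev(velocities) / avg


# Illustrative story-point totals per sprint (made-up numbers).
stable = [30, 32, 29, 31, 30, 28]
erratic = [30, 12, 45, 20, 38, 9]

print(round(velocity_volatility(stable), 3))
print(round(velocity_volatility(erratic), 3))
```

What counts as "too volatile" depends on the team's own history, so any cut-off should come from the baseline rather than from a universal constant.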

3. Cycle Time and Lead Time

Lead time measures the total time from request to delivery. Cycle time measures the active work duration. A widening gap between these two suggests waiting times, which often correlate with bottlenecks. Bottlenecks are significant sources of delivery risk.
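As a rough illustration, both measures and the gap between them can be derived from the three dates on a work item. The function names are assumptions, and the sketch counts calendar days; a real implementation might exclude weekends or use timestamps.

```python
from datetime import date


def lead_time_days(created: date, completed: date) -> int:
    """Total elapsed days from request to delivery."""
    return (completed - created).days


def cycle_time_days(started: date, completed: date) -> int:
    """Elapsed days from the start of work to delivery."""
    return (completed - started).days


def queue_days(created: date, started: date, completed: date) -> int:
    """Gap between lead time and cycle time: days spent waiting before work began."""
    return lead_time_days(created, completed) - cycle_time_days(started, completed)


created, started, completed = date(2024, 3, 1), date(2024, 3, 11), date(2024, 3, 15)
print(lead_time_days(created, completed))       # 14
print(cycle_time_days(started, completed))      # 4
print(queue_days(created, started, completed))  # 10
```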

4. Quality Metrics

Rework is a hidden risk. If a team builds a feature that is immediately rejected or requires patches, the effective velocity drops. Bug rates, escape defects, and code review turnaround times provide insight into technical debt and stability.

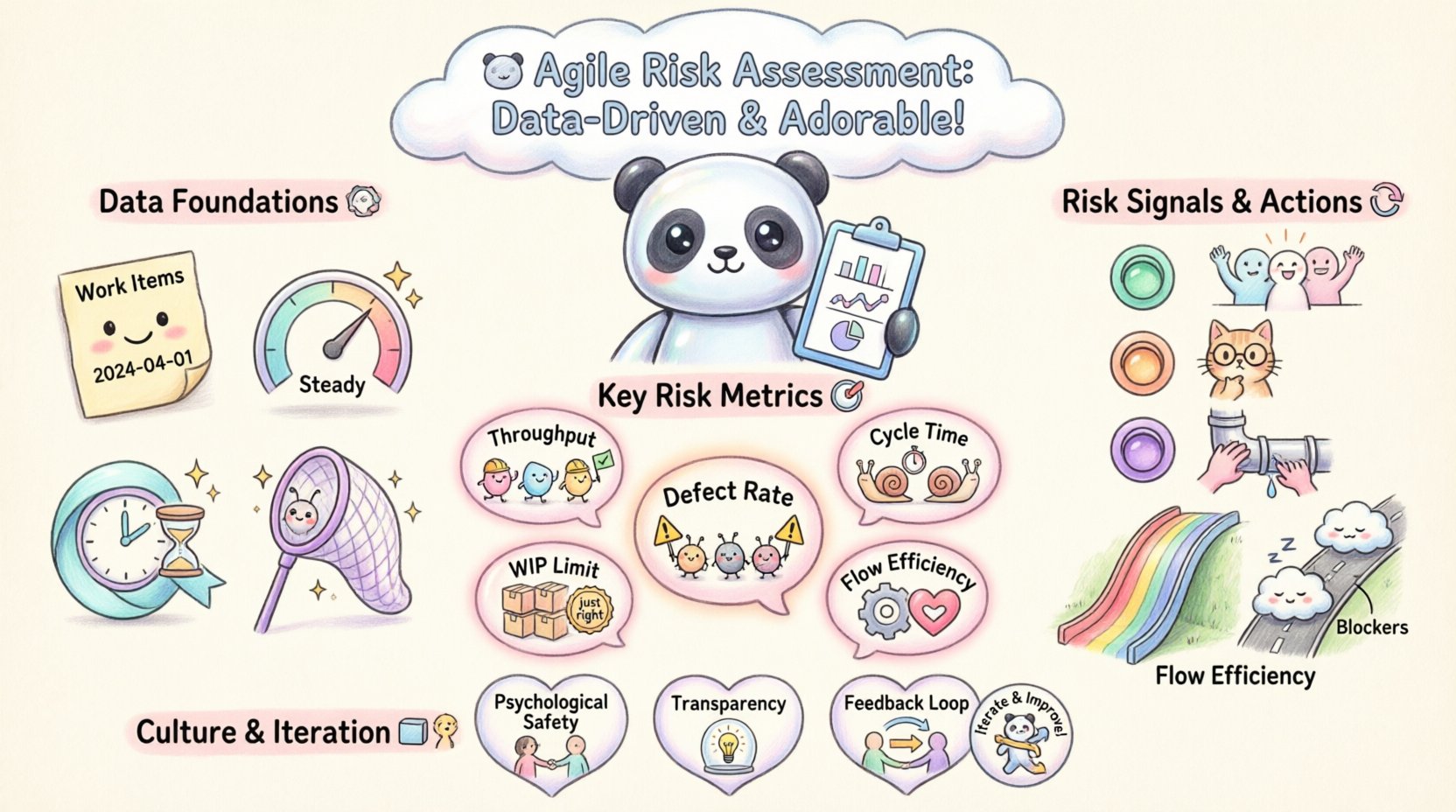

Key Metrics for Risk Evaluation 🎯

Selecting the right metrics is the most critical step in model design. Too many metrics create noise; too few create blind spots. The following table categorizes essential metrics and their specific risk implications.

| Category | Metric | Risk Indicator | Interpretation |
|---|---|---|---|
| Flow | Throughput | Volume Variance | Large swings in weekly output suggest instability in planning or capacity. |
| Flow | Cycle Time | Outliers | Items taking significantly longer than the median indicate process bottlenecks. |
| Quality | Defect Escape Rate | Backlog Growth | High escape rates suggest testing gaps, leading to future technical debt. |
| Planning | Commitment Reliability | Scope Creep | Frequent changes to committed scope indicate poor requirement definition. |
| Health | Work in Progress (WIP) | Context Switching | High WIP often correlates with slower throughput and increased stress. |

Each metric requires a baseline. You cannot determine if a cycle time of 10 days is risky without knowing the historical average for that specific team. The model must account for team maturity and the complexity of the domain.

Constructing the Assessment Framework 🔧

Once data is collected and metrics selected, the framework for assessment must be defined. This framework acts as the logic engine that processes raw data into risk signals. It should be transparent and reproducible.

Step 1: Establish Baselines

Before assessing risk, you must understand normal. Calculate the mean, median, and standard deviation for key metrics over a meaningful period (e.g., 6 to 12 weeks). This filters out one-off anomalies and establishes a pattern of behavior.
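A baseline of this kind can be computed with the Python standard library. The sketch below assumes the samples are completed-item cycle times in days; the function name and the sample values are illustrative only.

```python
from statistics import mean, median, stdev


def baseline(samples):
    """Summarize a metric's normal behavior over the chosen period."""
    if len(samples) < 2:
        raise ValueError("need at least two samples for a standard deviation")
    return {
        "mean": mean(samples),
        "median": median(samples),
        "stdev": stdev(samples),  # sample standard deviation
    }


# Hypothetical cycle times (days) for items finished in the baseline window.
cycle_times = [4, 5, 6, 5, 4, 6, 5, 5]
b = baseline(cycle_times)
print(b["mean"], b["median"], round(b["stdev"], 3))
```

Reporting the median alongside the mean is useful because cycle-time data is often skewed by a few long-running items.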

Step 2: Define Thresholds

Thresholds determine when a metric moves from “normal variance” to “risk signal.” These should not be arbitrary. For example, if the average cycle time is 5 days with a standard deviation of 1 day, a cycle time of 10 days sits five standard deviations above the mean, far outside normal variation. Expressing thresholds in standard deviations gives the model a statistical basis for flagging issues.
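The cycle-time example can be expressed as a z-score check. This is a sketch only: the cut-offs of 2 and 3 standard deviations (`warn_at`, `alert_at`) are assumed defaults chosen for illustration, not values taken from this guide, and each team would tune them.

```python
def z_score(value, base_mean, base_stdev):
    """Distance of a value from the baseline mean, in standard deviations."""
    if base_stdev <= 0:
        raise ValueError("baseline standard deviation must be positive")
    return (value - base_mean) / base_stdev


def classify(value, base_mean, base_stdev, warn_at=2.0, alert_at=3.0):
    """Map a metric value to 'normal', 'warn', or 'alert' (thresholds are assumptions)."""
    z = z_score(value, base_mean, base_stdev)
    if z >= alert_at:
        return "alert"
    if z >= warn_at:
        return "warn"
    return "normal"


print(z_score(10, 5, 1))   # 5.0
print(classify(10, 5, 1))  # alert
print(classify(7, 5, 1))   # warn
print(classify(6, 5, 1))   # normal
```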

Step 3: Weighting Factors

Not all risks are equal. A delay in a backend API might be less critical than a delay in a customer-facing UI. Assign weights to different areas of the delivery pipeline. This allows the model to prioritize risks that impact the customer value chain most heavily.
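One simple way to apply weights is a weighted average of per-area risk signals. The area names, the 0-to-1 signal scale, and the weight values below are hypothetical; the sketch only shows the mechanics.

```python
def weighted_risk(signals, weights):
    """Weighted average of per-area risk signals (each assumed to lie in 0..1)."""
    total_weight = sum(weights[area] for area in signals)
    if total_weight == 0:
        raise ValueError("weights must not sum to zero")
    return sum(signals[area] * weights[area] for area in signals) / total_weight


# Hypothetical weights: customer-facing work counts three times as much.
weights = {"customer_ui": 3.0, "backend_api": 1.0}

api_heavy = weighted_risk({"customer_ui": 0.2, "backend_api": 0.8}, weights)
ui_heavy = weighted_risk({"customer_ui": 0.8, "backend_api": 0.2}, weights)
print(round(api_heavy, 2))  # 0.35
print(round(ui_heavy, 2))   # 0.65
```

The same raw signals produce a higher score when the trouble sits in the more heavily weighted area, which is the prioritization effect the weighting step is meant to achieve.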

Step 4: Visualization

The output of the model must be digestible. Dashboards should highlight trends rather than static numbers. Cumulative Flow Diagrams (CFDs) are particularly useful here, as they visually represent the accumulation of work in different stages. A widening band in the CFD indicates that work is entering that stage faster than it leaves, which is a clear risk signal.

Interpreting Flow Efficiency 🔄

Flow is the lifeblood of Agile delivery. When flow is efficient, work moves smoothly from conception to production. When flow is blocked, risk rises quickly. Analyzing flow efficiency requires looking at the system as a whole, not just individual team members.

The Waiting Time Ratio

One of the most telling metrics is the ratio of waiting time to active work time. In a healthy system, work is mostly being done. If work is mostly waiting (in a queue, awaiting approval, or blocked), the system is fragile. Queues can act as a buffer that absorbs short-term shocks, but they also hide the problems that created them.
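The ratio can be computed directly once active and waiting time are tracked per item. The sketch below also shows flow efficiency, a closely related figure defined here as active time divided by total elapsed time; the function names and inputs are assumptions.

```python
def waiting_ratio(active_days, waiting_days):
    """Waiting time per unit of active work; above 1 means mostly waiting."""
    if active_days <= 0:
        raise ValueError("active time must be positive")
    return waiting_days / active_days


def flow_efficiency(active_days, waiting_days):
    """Share of total elapsed time in which the item was actively worked on."""
    total = active_days + waiting_days
    if total <= 0:
        raise ValueError("total time must be positive")
    return active_days / total


print(waiting_ratio(4, 10))              # 2.5
print(round(flow_efficiency(4, 10), 3))  # 0.286
```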

Blocker Analysis

Every item that stops work should be logged with a reason. Aggregating these reasons reveals systemic issues. Is the risk coming from external dependencies? Is it a lack of testing resources? Is it unclear requirements? Identifying the root cause of blockers allows for targeted mitigation rather than generic pressure.
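Aggregating blocker reasons can be as simple as a frequency count. This sketch assumes each blocker is logged as an (item key, reason) pair; the keys and reason labels are invented for illustration.

```python
from collections import Counter

# Hypothetical blocker log: (work item key, reason recorded when work stopped).
blocker_log = [
    ("PAY-101", "external dependency"),
    ("PAY-104", "unclear requirements"),
    ("PAY-107", "external dependency"),
    ("PAY-110", "no test environment"),
    ("PAY-113", "external dependency"),
]


def blocker_summary(log):
    """Return (reason, count) pairs, most frequent first."""
    return Counter(reason for _, reason in log).most_common()


summary = blocker_summary(blocker_log)
print(summary[0])  # ('external dependency', 3)
```

Free-text reasons fragment quickly, so a short fixed list of reason categories tends to make this kind of count more useful.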

Batch Size Impact

Large batch sizes increase risk. A feature comprising 50 stories carries more risk than a feature comprising 5 stories. If the larger batch fails, the loss is greater. The model should encourage smaller batches by measuring the correlation between batch size and cycle time. If large batches consistently result in delays, the model should flag high-risk work items for splitting.
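The relationship between batch size and cycle time can be checked with a Pearson correlation coefficient. The sketch below implements it by hand so it runs on any Python 3 version; the feature sizes and cycle times are made-up sample data.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length, at least 2 points")
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant data")
    return cov / sqrt(var_x * var_y)


# Hypothetical features: stories per feature and days from start to done.
batch_sizes = [5, 8, 12, 20, 35, 50]
cycle_days = [6, 9, 11, 19, 30, 48]

r = pearson(batch_sizes, cycle_days)
print(round(r, 3))
```

A coefficient near 1 on a team's own history would support flagging large features for splitting; correlation alone does not show that batch size is the cause, so it is best read alongside blocker data.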

Quality as a Risk Signal 🛡️

Speed without quality is a leading cause of project failure. In Agile, quality is not a phase; it is a continuous state. However, technical debt accumulates silently. The risk assessment model must include quality indicators that track the health of the codebase over time.

Defect Density

Measuring defects per unit of work (e.g., per story point or per hour) provides a normalized view of quality. A spike in defect density often precedes a drop in velocity. If a team releases code that is frequently buggy, they will eventually spend more time fixing bugs than building new features.
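As an illustration, defect density per story point is a single division applied per sprint. The sprint labels and counts below are hypothetical.

```python
def defect_density(defects, story_points):
    """Defects found per story point delivered."""
    if story_points <= 0:
        raise ValueError("story points must be positive")
    return defects / story_points


# Hypothetical sprints: (name, defects found, story points delivered).
sprints = [("S1", 3, 30), ("S2", 4, 32), ("S3", 9, 29)]
densities = {name: defect_density(d, p) for name, d, p in sprints}

for name, value in densities.items():
    print(name, round(value, 3))
```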

Test Coverage Trends

While test coverage percentage is a debated metric, the trend is valuable. A declining trend in automated test coverage indicates a growing risk of regression. If new features are added without corresponding tests, the fragility of the system increases.
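A trend can be reduced to a single number by fitting a straight line through the readings and taking its slope. The sketch below uses an ordinary least-squares slope over equally spaced measurements; the coverage figures are invented.

```python
def trend_slope(values):
    """Least-squares slope of values measured at equally spaced intervals."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two measurements")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    den = sum((x - mean_x) ** 2 for x in range(n))
    return num / den


# Hypothetical coverage percentage at the end of five consecutive sprints.
coverage = [82.0, 81.0, 80.0, 79.0, 78.0]
slope = trend_slope(coverage)
print(slope)  # -1.0 (one percentage point lost per sprint)
```

A negative slope sustained over several sprints is the signal of interest; a single dip is usually noise.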

Hotfix Frequency

How often does the team need to issue hotfixes to production? Frequent hotfixes indicate instability. This is a direct risk to customer trust and operational stability. The model should track the ratio of hotfixes to normal releases. A high ratio suggests that the delivery pipeline is not stable enough for production.
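One way to track this is the share of all production deployments that were hotfixes, so that a higher value always means less stability. The function name and the counts are assumptions for illustration.

```python
def hotfix_share(normal_releases, hotfixes):
    """Fraction of all production deployments that were hotfixes."""
    total = normal_releases + hotfixes
    if total == 0:
        raise ValueError("no deployments recorded")
    return hotfixes / total


print(hotfix_share(18, 2))  # 0.1
print(hotfix_share(6, 6))   # 0.5
```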

Cultural Factors in Risk Reporting 🗣️

Data does not exist in a vacuum. The culture of the organization heavily influences the accuracy of the data. If the environment penalizes bad news, the data will be manipulated to look better than reality. This is known as gaming the metrics; sandbagging, where estimates are padded so that targets are easy to hit, is one common form.

Psychological Safety

Teams must feel safe reporting risks. If a team member admits they are behind schedule and is immediately criticized, they will hide the issue until it is too late. The risk model must be decoupled from performance management. It should be a tool for improvement, not a weapon for accountability.

Transparency

All data used for risk assessment should be visible to the entire organization. Hiding data creates silos of information where risks can fester. Transparency ensures that stakeholders understand the constraints and limitations of the delivery process.

Continuous Feedback

The model itself should be subject to feedback. If the risk indicators are consistently wrong, the model needs adjustment. This requires a culture of continuous improvement applied to the risk management process itself.

Iterating on the Model 🔄

An Agile risk assessment model is not a one-time setup. It requires ongoing refinement. The software landscape changes, team composition changes, and business priorities shift. A static model will eventually become obsolete.

Regular Calibration

Schedule regular reviews of the model’s accuracy. Are the thresholds still relevant? Are the metrics still capturing the right risks? Adjust the parameters based on new data and stakeholder feedback.

Emerging Patterns

Look for patterns that were not previously identified. Perhaps a specific type of integration work always carries high risk. Perhaps a specific time of year correlates with higher defect rates. Incorporate these emerging patterns into the weighting of the model.

Stakeholder Alignment

Ensure that stakeholders understand what the risk model is telling them. A high-risk score does not mean the project will fail; it means the probability of deviation from the plan is higher. Clear communication prevents panic and facilitates better decision-making.

Common Pitfalls to Avoid ⚠️

Even with a solid framework, there are common mistakes that can undermine the effectiveness of the risk assessment.

- Over-Engineering the Model: Building a complex algorithm that requires manual data entry is unsustainable. The model should be automated where possible to reduce friction.

- Ignoring Qualitative Data: Numbers tell only part of the story. Retrospective discussions and team sentiment analysis provide context that raw data cannot capture.

- Comparing Teams: Comparing the risk scores of different teams is often unfair. Teams work on different domains with different complexities. Focus on the trend within a single team over time.

- Reactive Mitigation: Do not wait for a risk to materialize before acting. The model should trigger preventive actions when signals appear, not just after the damage is done.

Integrating Stakeholder Feedback 🤝

The final piece of the puzzle is the integration of stakeholder feedback. While the model provides objective data, stakeholders provide subjective context. A feature might be technically on track, but if the business value is no longer relevant, the project is at risk.

Value Delivery

Risk is not just about delivery speed; it is about value realization. If a team delivers a feature perfectly but the market has moved on, the risk was in the planning phase. Stakeholder interviews should be used to validate that the work being done aligns with current business goals.

Expectation Management

The model should be used to manage expectations. If the risk score is high, stakeholders need to know early. This allows them to adjust their own plans, such as budgeting or marketing timelines, to accommodate the increased uncertainty.

Final Thoughts on Data-Driven Risk 🧭

Building a risk assessment model using Agile delivery data is an exercise in humility. It acknowledges that the future is uncertain and that we must navigate based on the best available signals. It moves the conversation from “Will we finish on time?” to “What are the probabilities, and how do we manage them?”

By focusing on flow, quality, and stability, organizations can reduce the anxiety associated with delivery. The data does not eliminate risk, but it makes it visible. When risk is visible, it can be managed. This visibility empowers teams to make better decisions, allocate resources more effectively, and ultimately deliver value with greater consistency.

Remember that the tool is secondary to the practice. A perfect model is useless if the team does not trust the data. Invest in building trust, transparency, and a culture where data is used to learn and improve, not to judge. This is the foundation of sustainable Agile delivery.