Reaching product market fit is often described as the holy grail for startups and product teams. It is the point where a product satisfies a strong market demand. However, the path to that point is rarely a straight line. It involves experimentation, learning, and adaptation. This is where rapid agile iterations become essential. By breaking development into small, manageable cycles, teams can test assumptions early and often. This approach reduces waste and improves the chance of success.

In this guide, we will explore how to structure validation efforts within an agile framework. We will look at the metrics that matter, the types of feedback to gather, and the common traps to avoid. The goal is to build a sustainable product that users actually want, without burning through resources on features that do not resonate.

Understanding Product Market Fit in an Agile Context 🎯

Product market fit (PMF) is not a binary switch. It is a spectrum, and at any moment you are moving either toward it or away from it. In a traditional waterfall model, teams might spend months building a full product before releasing it. By that time, the market needs may have shifted, or the initial assumptions may have proven false. Agile methodology flips this dynamic. In the words of the Agile Manifesto, it values working software over comprehensive documentation and customer collaboration over contract negotiation.

When validating PMF with agile, the focus shifts from “building everything” to “learning everything.” Each iteration serves as a hypothesis test. You propose a solution, you build a version of it, you release it to a subset of users, and you measure the results. If the data shows positive traction, you iterate further. If the data shows indifference, you pivot or adjust.

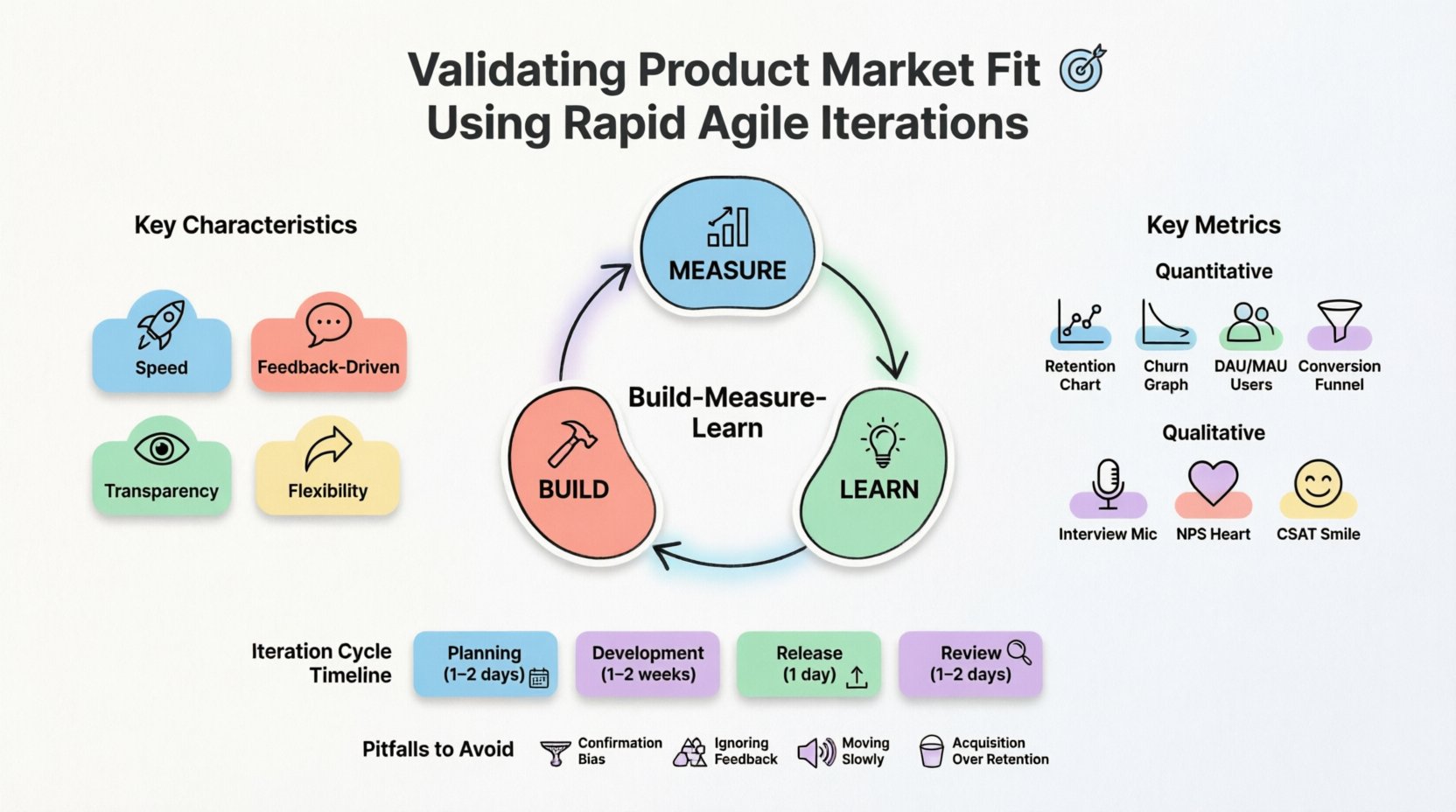

Key Characteristics of Agile PMF Validation

- Speed: Cycles are short, typically one to four weeks.

- Feedback-Driven: Decisions are based on user behavior, not internal opinions.

- Transparency: Progress and risks are visible to the whole team.

- Flexibility: The backlog can be reprioritized based on new learning.

The Framework for Validation 🔄

Implementing rapid iterations requires a structured approach. You cannot simply “build fast” without direction. You need a framework that guides your decision-making. The Build-Measure-Learn loop is the core engine of this process. It ensures that every piece of code written contributes to learning about the market.

1. The Build Phase: Defining the MVP

The Minimum Viable Product (MVP) is often misunderstood. It does not mean a broken product. It means the smallest set of features required to test your core value proposition. When designing the MVP for PMF validation, ask yourself: What is the one thing this product must do to be useful? Remove everything else.

- Focus on the core user journey.

- Avoid “nice-to-have” features that delay testing.

- Ensure the technical foundation is stable enough to gather data.

2. The Measure Phase: Data Collection

Once the iteration is live, the focus shifts to measurement. You need to know if users are engaging with the product in the way you predicted. This involves setting up tracking mechanisms. You must define what success looks like before you start the iteration.

- Set clear goals for the sprint.

- Implement analytics to track user flows.

- Collect qualitative feedback through direct interaction.

3. The Learn Phase: Analysis and Pivot

At the end of the iteration, the team reviews the data. Did the users adopt the feature? Did they stick around? If the metrics are below the target, the team must decide whether to persevere or pivot. This decision is based on evidence, not emotion.
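One way to keep that review tied to evidence is to record the hypothesis and its success threshold before the iteration starts, then compare the observed value against it afterwards. The sketch below is only an illustration: the `Hypothesis` class, the `met_target` helper, the metric name, and the 0.40 threshold are all hypothetical, not part of any standard tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """One iteration's bet, written down before the sprint starts."""
    statement: str  # what we believe users will do
    metric: str     # the metric that tests the belief
    target: float   # success threshold agreed up front


def met_target(hypothesis: Hypothesis, observed: float) -> bool:
    """True when the observed metric reaches the threshold agreed up front."""
    return observed >= hypothesis.target


# Illustrative values only, not a benchmark.
h = Hypothesis(
    statement="New users will complete onboarding without help",
    metric="onboarding_completion_rate",
    target=0.40,
)

observed = 0.31
if met_target(h, observed):
    print(f"{h.metric}: target met, iterate further")
else:
    print(f"{h.metric}: below target, discuss persevere or pivot")
```

The function deliberately reports only whether the target was met. Whether to persevere or pivot remains a team decision informed by the qualitative feedback as well.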

Key Metrics for PMF Validation 📊

Not all metrics are created equal. Vanity metrics, such as total downloads or page views, can look good but do not indicate true value. To validate PMF, you need engagement and retention metrics. These numbers tell you if users are deriving value from your product.

Quantitative Metrics

- Retention Rate: The percentage of users who return to the product within a defined period after first use, for example on day 7 or day 30. High retention is a strong signal of fit.

- Churn Rate: The percentage of users who stop using the product in a given period. It is the complement of retention, so low churn indicates satisfaction.

- Active Users: Daily and monthly active users (DAU and MAU). The ratio of the two, DAU/MAU, shows how integrated the product is in users' routines.

- Conversion Rate: The percentage of users who complete a desired action, such as signing up or purchasing.
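The four metrics above reduce to simple ratios. The following is a minimal sketch, assuming you can export sets of user IDs and raw counts from your analytics tool; the function names and the sample data are hypothetical and chosen only for illustration.

```python
from __future__ import annotations


def retention_rate(cohort: set[str], active_later: set[str]) -> float:
    """Share of the cohort that was active again in the later period."""
    if not cohort:
        return 0.0
    return len(cohort & active_later) / len(cohort)


def churn_rate(cohort: set[str], active_later: set[str]) -> float:
    """Share of the cohort that did not come back in the later period."""
    if not cohort:
        return 0.0
    return len(cohort - active_later) / len(cohort)


def stickiness(dau: int, mau: int) -> float:
    """DAU divided by MAU: how much of the monthly audience shows up on a given day."""
    return dau / mau if mau else 0.0


def conversion_rate(converted: int, exposed: int) -> float:
    """Share of exposed users who completed the desired action."""
    return converted / exposed if exposed else 0.0


# Illustrative data only, not real results.
signup_cohort = {"u1", "u2", "u3", "u4"}
active_week_2 = {"u2", "u4", "u9"}  # u9 is outside the cohort and is ignored

print(retention_rate(signup_cohort, active_week_2))  # 0.5
print(churn_rate(signup_cohort, active_week_2))      # 0.5
print(stickiness(dau=120, mau=400))                  # 0.3
print(conversion_rate(converted=30, exposed=600))    # 0.05
```

Measuring retention against a fixed signup cohort, rather than against all users, keeps new signups from masking the fact that earlier users have left.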

Qualitative Metrics

- User Interviews: Direct conversations to understand pain points and satisfaction.

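- Net Promoter Score (NPS): A survey-based score of how likely users are to recommend the product. The score itself is a number, while the follow-up comments supply the qualitative insight.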

- Customer Satisfaction (CSAT): Feedback on specific interactions or features.
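NPS is conventionally computed from a 0 to 10 "how likely are you to recommend" question: the percentage of promoters (scores of 9 or 10) minus the percentage of detractors (scores of 0 to 6). A minimal sketch, with a hypothetical function name and made-up responses:

```python
from __future__ import annotations


def net_promoter_score(scores: list[int]) -> float:
    """Percent promoters (9-10) minus percent detractors (0-6), from -100 to 100."""
    if not scores:
        raise ValueError("need at least one survey response")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100.0 * (promoters - detractors) / len(scores)


# Illustrative responses only: 5 promoters, 2 passives, 3 detractors.
responses = [10, 9, 9, 8, 7, 6, 3, 10, 9, 5]
print(net_promoter_score(responses))  # 20.0
```

Passives (scores of 7 or 8) count toward the total but not toward either group, which is why a product with many lukewarm users can still score near zero.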

Designing the Iteration Cycle ⚙️

How do you structure the work itself? The iteration cycle should be consistent. This creates a rhythm for the team and allows for predictable delivery of value. Below is a breakdown of how a standard validation sprint might look.

| Phase | Duration | Key Activities |
|---|---|---|
| Planning | 1-2 days | Select hypotheses, define metrics, assign tasks. |
| Development | 1-2 weeks | Code features, conduct usability tests, fix bugs. |
| Release | 1 day | Deploy to a subset of users, monitor stability. |
| Review | 1-2 days | Analyze data, gather feedback, plan next iteration. |

This structure ensures that you are constantly moving forward. It prevents the team from getting stuck in endless planning or development without validation. The review phase is critical. It is where the learning happens.
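The release step in the table calls for deploying to a subset of users. One common way to do that, sketched here under assumptions, is to hash each user ID into one of 100 buckets so the same user always gets the same answer. The `in_rollout` function, the feature name, and the user IDs are hypothetical. A feature-flag service would normally handle this for you.

```python
import hashlib


def in_rollout(user_id: str, feature: str, percent: int) -> bool:
    """Deterministically place a user in the first `percent` of 100 buckets."""
    # Including the feature name gives each feature its own independent split.
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100
    return bucket < percent


# Illustrative user IDs only.
users = [f"user-{i}" for i in range(1000)]
exposed = [u for u in users if in_rollout(u, "new-onboarding", 10)]
print(f"{len(exposed)} of {len(users)} users see the new onboarding")
```

Because the bucket depends only on the user ID and the feature name, raising `percent` from 10 to 50 keeps the original users in the exposed group and adds new ones, so earlier measurements stay comparable.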

Gathering Qualitative vs Quantitative Data 🗣️

Numbers tell you what is happening, but they do not explain why. To truly understand product market fit, you need both quantitative and qualitative data. Quantitative data provides the scale, while qualitative data provides the depth.

The Role of Qualitative Data

- Context: Numbers show a drop-off in a funnel, but interviews explain why users dropped off.

- Emotion: Users can express frustration or delight in their own words.

- Unmet Needs: Users might suggest features you had not considered.

During each iteration, schedule time for user interviews. Do not rely solely on automated tracking. Speak to users who used the feature and those who did not. Ask open-ended questions. What was their goal? Did they achieve it? What stopped them?

The Role of Quantitative Data

- Validation: Large sample sizes confirm whether a qualitative finding is widespread.

- Trends: Long-term data shows if changes in the product lead to sustained growth.

- Efficiency: Automated tracking provides near-real-time insights with little manual effort.

Balance your time between these two. If you only look at numbers, you may optimize for the wrong things. If you only listen to users, you may build features that only a few want.
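To see why sample size matters when you check a finding quantitatively, it can help to put an interval around an observed rate. The sketch below uses the Wilson score interval, which is one standard choice among several and not something this guide prescribes. The function name and the sample counts are illustrative assumptions.

```python
from __future__ import annotations

import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a proportion (z = 1.96)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    # Clamp to [0, 1] to absorb floating-point rounding at the extremes.
    return max(0.0, center - half), min(1.0, center + half)


# Same observed rate (40%), very different certainty. Illustrative counts only.
small_low, small_high = wilson_interval(8, 20)
large_low, large_high = wilson_interval(800, 2000)
print(f"n=20:   {small_low:.2f} to {small_high:.2f}")
print(f"n=2000: {large_low:.2f} to {large_high:.2f}")
```

With 20 users, the interval is wide enough that the true rate could be far from 40%. With 2,000 users, it narrows considerably. This is the sense in which numbers provide scale: they tell you how far a pattern heard in interviews actually extends.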

Pitfalls to Avoid in Agile Validation 🚧

Even with a good framework, teams can stumble. There are common mistakes that prevent effective validation. Recognizing these pitfalls early can save significant time and resources.

1. Confirmation Bias

It is easy to interpret data in a way that supports your beliefs. If you want a feature to succeed, you might ignore signals that it is failing. You must remain objective. If the data says no, listen to the data.

2. Feature Creep

As the product evolves, there is pressure to add more functionality. This dilutes the focus of the MVP. Stick to the core value proposition. If a new idea comes up, add it to the backlog for a later iteration.

3. Ignoring Negative Feedback

Users are often more vocal about what they hate than what they love. Ignoring complaints is a missed opportunity. Negative feedback is often the most valuable input for improvement.

4. Moving Too Slowly

Agile depends on fast feedback. If your iterations take too long, you lose the advantage of rapid learning. Keep cycles short enough to allow for quick pivots if necessary.

5. Focusing on Acquisition Over Retention

It is tempting to focus on getting new users. However, if existing users are not staying, new users will not help. Focus on retention first. A leaky bucket will not fill up no matter how much water you pour in.

Team Dynamics and Collaboration 👥

Validating PMF is not just a product manager task. It requires cross-functional collaboration. Developers, designers, and marketers must all be aligned on the validation goals.

- Developers: Must understand the “why” behind the feature to build it correctly.

- Designers: Need to create experiences that facilitate user feedback.

- Marketing: Must help reach the right users for testing.

Regular syncs are essential. The team should discuss progress, blockers, and insights. This ensures everyone is working toward the same definition of success. A siloed team will struggle to adapt quickly to market feedback.

Scaling After Validation 📈

Once you have validated product market fit, the strategy shifts. You are no longer primarily testing whether the core value proposition holds. You are scaling what works. This means optimizing for efficiency and growth rather than exploration.

- Invest in infrastructure to handle increased load.

- Refine the onboarding process to improve activation.

- Expand marketing channels to reach a broader audience.

- Begin building features that deepen engagement and loyalty.

Do not abandon the agile mindset. Continue to iterate on the product to maintain fit as the market evolves. What works today may not work tomorrow. Continuous improvement is the key to long-term success.

Conclusion 🏁

Validating product market fit using rapid agile iterations is a disciplined process. It requires a commitment to learning and a willingness to change direction based on evidence. By breaking work into small cycles, measuring the right metrics, and listening to users, teams can reduce risk and increase the likelihood of building a successful product.

The path to product market fit is rarely linear. It involves trial and error. However, with a structured agile approach, you can navigate this uncertainty with confidence. Focus on the value you provide to users, not the features you build. When users love your product, the business will follow.